SAP Datasphere and SNP Glue

In various blog posts we have looked at how customers can leverage SNP Glue in tandem with cloud-based data warehouses and data lakes to get the most out of their SAP data. SNP Glue helps customers unlock the SAP data silo with a high-performance integration solution. For example, it is possible to stream SAP data with SNP Glue’s state-of-the-art delta capture (change data capture, CDC) in near-real time to cloud-based data platforms.
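To make delta capture a bit more tangible, here is a minimal Python sketch of what the receiving side of any CDC stream has to get right: apply inserts, updates, and deletes in the order they happened, and stay idempotent if a batch is replayed after a restart. This is not how SNP Glue is implemented. The `Change` record layout, the operation codes `I`/`U`/`D`, and the sequence-number checkpoint are assumptions for illustration only.

```python
from dataclasses import dataclass
from typing import Any, Dict, Iterable, Optional, Tuple

Key = Tuple[Any, ...]


@dataclass(frozen=True)
class Change:
    """One captured change (hypothetical layout, not SNP Glue's format)."""
    seq: int                              # increasing sequence number assigned at capture
    op: str                               # "I" = insert, "U" = update, "D" = delete
    key: Key                              # primary key of the source row
    row: Optional[Dict[str, Any]] = None  # after-image for "I"/"U"; None for "D"


def apply_changes(target: Dict[Key, Dict[str, Any]],
                  changes: Iterable[Change], last_seq: int = 0) -> int:
    """Apply changes to a keyed target table in sequence order.

    Changes with seq <= last_seq were applied in an earlier run and are
    skipped, so replaying a batch after a restart does not duplicate data.
    Returns the highest applied sequence number (the new checkpoint).
    """
    for ch in sorted(changes, key=lambda c: c.seq):
        if ch.seq <= last_seq:
            continue
        if ch.op in ("I", "U"):
            if ch.row is None:
                raise ValueError(f"change {ch.seq} has no after-image")
            target[ch.key] = dict(ch.row)  # upsert: insert or overwrite
        elif ch.op == "D":
            target.pop(ch.key, None)       # deleting a missing key is a no-op
        else:
            raise ValueError(f"unknown operation {ch.op!r}")
        last_seq = ch.seq
    return last_seq


# Usage: a batch that arrives out of order is still applied in capture order.
target: Dict[Key, Dict[str, Any]] = {}
batch = [
    Change(2, "U", ("4711",), {"MATNR": "4711", "QTY": 7}),
    Change(1, "I", ("4711",), {"MATNR": "4711", "QTY": 5}),
    Change(3, "I", ("4712",), {"MATNR": "4712", "QTY": 1}),
    Change(4, "D", ("4712",)),
]
checkpoint = apply_changes(target, batch)
print(checkpoint, target)  # 4 {('4711',): {'MATNR': '4711', 'QTY': 7}}
```

Persisting the returned checkpoint is what lets a stream resume after an interruption without duplicating or losing changes.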

Your contact

Götz Lessmann

Managing Director & CTO DEV OWN


Today, customers mostly ask about integration with cloud-based data warehouses from the usual suspects: the hyperscalers and Snowflake. However, this may be a bit short-sighted. When dealing with SAP data, it is definitely worthwhile to look at what SAP itself has to offer. With the 2023 revamp of Data Warehouse Cloud (DWC) into Datasphere, which brings new features and an ambitiously large scope, there is a highly interesting offering available that warrants more attention.

There may be some reasons for this short-sightedness when comparing solutions. After all, SAP has unfortunately managed to spread confusion about reporting and data warehousing, going back to the early days of SAP HANA, when Hasso Plattner himself told customers that with HANA they would not need SAP BW anymore (and by the way, does anybody remember how HANA Vora fit into that picture?).

Today, SAP BW (BW/4HANA) is going strong, and SAP has transformed itself into a cloud company to some degree (obviously, many customers need time to transform themselves and still follow their age-old on-premises strategies when it comes to their core business systems). With this in mind, let’s take a look at SAP’s cloud capabilities for data warehousing and reporting!

Some years ago, SAP introduced DWC, the Data Warehouse Cloud. With the latest release, SAP extended it into the Datasphere product. This is not just a revamped SAP BW on steroids, but a much more modern and ambitious development. For example, one of the traditional weaknesses of SAP BW has always been that it is notoriously difficult to find talent on the job market to actually implement it. The reason is that you need a unique profile combining business knowledge, data knowledge, and coding skills in SAP’s own programming language, ABAP. Without this mix, you could not adapt your implementation to your own business in terms of data modelling and the data transformations applied during ingestion into SAP BW.

With SAP Datasphere, you can use the data scientist’s language of choice, Python, to implement these transformations. This is obviously much more modern and scalable. And hey, even ABAP dinosaurs such as myself would love to see a Python compiler integrated into the NetWeaver ABAP stack as a sidecar to the ABAP engine, and in saying this, maybe somebody in Walldorf will read this and start thinking (wink, wink).
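To give a feel for the kind of ingestion-time transformation that no longer requires ABAP, here is a small Python sketch that cleans SAP-style sales rows: it strips the zero padding from numeric material numbers, turns `YYYYMMDD` date strings into real dates, and normalizes amounts and currency keys. In Datasphere, Python enters through the script operator of a data flow, which, in our reading of SAP’s documentation, hands the data to a `transform` function as a pandas DataFrame; please check the exact contract there. To keep this sketch dependency-free, it works on a list of dicts instead, and the field selection is a hypothetical example.

```python
from datetime import date


def transform(rows):
    """Clean SAP-style sales rows at ingestion time (hypothetical field names).

    Each row is a dict with MATNR (material number), ERDAT (created-on date
    as 'YYYYMMDD'), NETWR (net value as text) and WAERS (currency key).
    """
    result = []
    for row in rows:
        matnr = row["MATNR"].strip()
        # Purely numeric material numbers are stored zero-padded; drop the padding.
        material = (matnr.lstrip("0") or "0") if matnr.isdigit() else matnr

        erdat = row["ERDAT"].strip()
        # An all-zero or blank date means "no date" in SAP tables; map it to None.
        if not erdat.strip("0"):
            created_on = None
        else:
            created_on = date(int(erdat[:4]), int(erdat[4:6]), int(erdat[6:8]))

        result.append({
            "material": material,
            "created_on": created_on,
            "net_value": round(float(row["NETWR"]), 2),
            "currency": row["WAERS"].strip(),
        })
    return result


# Usage
print(transform([
    {"MATNR": "000000000000004711", "ERDAT": "20230315", "NETWR": "199.50", "WAERS": "EUR "},
    {"MATNR": "PUMP-01", "ERDAT": "00000000", "NETWR": "0", "WAERS": "USD"},
]))
```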

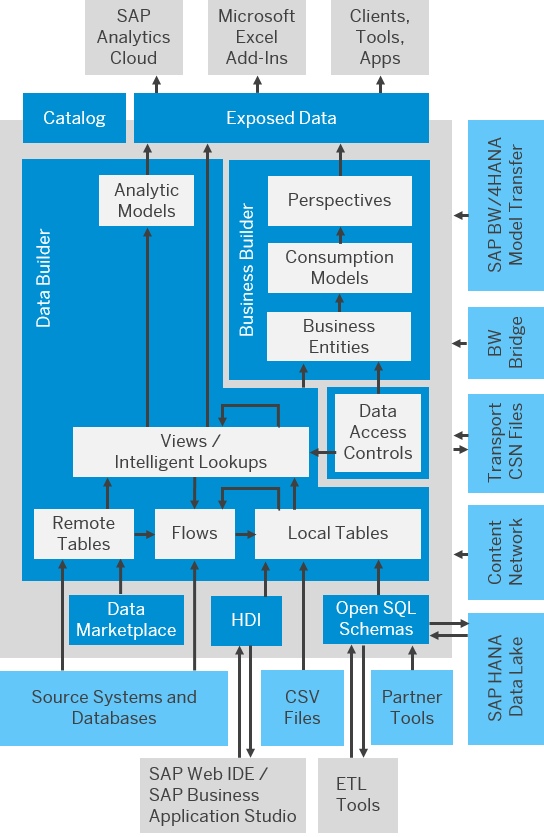

Datasphere offers what you would expect from a modern data warehouse: data storage, data catalog features, and even data self-service for employees. It offers multiple connectors to other cloud or on-premises sources. The most important ones, though, are those in the “SAP” category, where customers have a lot to choose from: everything from an ABAP type of connection, through SAP BW or BW/4HANA models, to SAP S/4HANA Cloud offerings. Besides the usual “pull” approach available through these connections, third-party ETL tools can use Open SQL schemas to write data directly into a data layer in the Datasphere tenant.
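As an illustration of this “push” path, here is a minimal Python sketch of how an ETL job might write rows in batches through a standard DB-API 2.0 connection. Against Datasphere, that connection would presumably come from SAP’s `hdbcli` driver, logged on with the database user of an Open SQL schema; to keep the sketch runnable locally, Python’s built-in `sqlite3` stands in. The function names, table, and columns are hypothetical, and we assume the target driver accepts `?` placeholders (qmark style) as `sqlite3` does.

```python
import sqlite3


def quote_ident(name):
    """Quote an SQL identifier with double quotes, escaping embedded quotes."""
    return '"' + name.replace('"', '""') + '"'


def load_batches(conn, table, columns, rows, schema=None, batch_size=1000):
    """Insert rows into a target table through any DB-API 2.0 connection.

    Uses '?' placeholders, sends rows in chunks of batch_size via
    executemany, commits once at the end and returns the number of rows.
    On any error the transaction is rolled back and the error re-raised.
    """
    target = quote_ident(table)
    if schema is not None:
        target = f"{quote_ident(schema)}.{target}"
    col_list = ", ".join(quote_ident(c) for c in columns)
    placeholders = ", ".join("?" for _ in columns)
    sql = f"INSERT INTO {target} ({col_list}) VALUES ({placeholders})"

    cur = conn.cursor()
    written = 0
    try:
        for start in range(0, len(rows), batch_size):
            batch = rows[start:start + batch_size]
            cur.executemany(sql, batch)
            written += len(batch)
        conn.commit()
    except Exception:
        conn.rollback()
        raise
    finally:
        cur.close()
    return written


# Usage with an in-memory SQLite database standing in for the real target.
demo = sqlite3.connect(":memory:")
demo.execute('CREATE TABLE "SALES_ITEMS" ("VBELN" TEXT, "POSNR" TEXT, "NETWR" REAL)')
items = [("0000012345", "000010", 99.5), ("0000012345", "000020", 10.0)]
print(load_batches(demo, "SALES_ITEMS", ["VBELN", "POSNR", "NETWR"], items,
                   schema="main", batch_size=1))  # 2
```

Committing once per load keeps the target consistent: either the whole batch lands, or nothing does.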

Datasphere runs on SAP’s own HANA in-memory database, which, being column-based, delivers excellent performance for reporting workloads. With modern hardware, the traditional limitations in terms of memory and storage are not really an issue anymore, and with business data from ERP alone you will not build data lakes of hundreds of terabytes in any case. Great performance in combination with SAP HANA features such as data federation (HANA views) is clearly more important in that regard.
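A toy Python sketch can show why a column store suits reporting queries: each column is stored separately and dictionary-encoded (HANA’s column store also relies on dictionary compression), so an aggregation such as “quantity per plant” only reads the two columns it needs instead of every full record. This is a conceptual illustration, not how HANA is actually implemented; the function names and sample data are made up.

```python
def build_column_table(rows, columns):
    """Store each column separately, dictionary-encoded.

    Each column becomes (dictionary, codes): the sorted distinct values and,
    per row, the integer position of that row's value in the dictionary.
    """
    table = {}
    for col in columns:
        values = [row[col] for row in rows]
        dictionary = sorted(set(values))
        position = {value: i for i, value in enumerate(dictionary)}
        table[col] = (dictionary, [position[v] for v in values])
    return table


def sum_by(table, key, measure):
    """SUM(measure) GROUP BY key, reading only the two columns involved."""
    key_dict, key_codes = table[key]
    measure_dict, measure_codes = table[measure]
    totals = [0] * len(key_dict)
    for k, m in zip(key_codes, measure_codes):
        totals[k] += measure_dict[m]
    return dict(zip(key_dict, totals))


# Usage: the "material" column is never touched by the aggregation.
rows = [
    {"plant": "1000", "material": "A-100", "qty": 5},
    {"plant": "2000", "material": "B-200", "qty": 3},
    {"plant": "1000", "material": "C-300", "qty": 2},
    {"plant": "1000", "material": "A-100", "qty": 5},
]
table = build_column_table(rows, ["plant", "material", "qty"])
print(table["plant"])                  # (['1000', '2000'], [0, 1, 0, 0])
print(sum_by(table, "plant", "qty"))   # {'1000': 12, '2000': 3}
```

Repeated values such as plant keys collapse into small integer codes, which is one reason column stores compress business data so well.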

SAP Datasphere is not meant to be a final data consumption platform, though. It relies on SAP Analytics Cloud (SAC) or other third-party front-end technologies. The overall architecture is illustrated in the diagram below.

One of the key features that distinguishes Datasphere from traditional SAP BW is the Data Marketplace, which allows you to leverage the true power of the cloud. It is meant for three use cases:

- Internal Data Sharing

This allows you to rethink your data warehousing strategy. Each part of the organization (e.g., finance, marketing, procurement) can act as a separate data creator and provider and can decide which data to share and how. As a result, the complete process of data sharing is decentralized and therefore more agile. It also becomes much easier to allocate costs from a controlling perspective. This reminds me of good old OOP concepts such as encapsulation and inheritance (sigh). The same concept is used for Private and Public Data Sharing.

- Private Data Sharing

You can easily share your data with a subsidiary or with headquarters, the same way you share it internally. Again, this is an elegant way of dealing with a difficult problem while ensuring simplicity, security, and compliance.

- Public Data Sharing

On top of that, there are more than 3,000 data products from 100+ data providers, which can be easily consumed: weather data, stock market information, or population statistics are just a click away (and of course you have to pay some money for that 😉). And yes, in theory you can even sell your own data there to other companies.

To simplify and accelerate the implementation of and transition to Datasphere, SAP offers the “Datasphere, BW Bridge”. Customers can use this technology to gradually migrate from existing SAP BW solutions to Datasphere. SAP promises that data models, customizing, and data can be reused for up to 80% of the SAP BW objects in scope. Obviously, some exotic or legacy data types of BW InfoProviders will not be migrated automatically, but SAP BW customers would need to tidy up and “renovate” these eventually anyway.

Technically, the BW Bridge runs in a separate cloud tenant but shares data with the Datasphere tenant. Being built on top of SAP BW/4HANA (with slightly changed features), the BW Bridge sits between the legacy BW system and the cloud solutions. You can use it for staging and as a pass-through during the migration and transition. With its integration into SAP’s BTP cloud solution, this is not only a consistent move from SAP, but also offers customers two important benefits:

- It allows the use of SAP ABAP during the transition

- More importantly, it may offer customers a very cool option for a test drive of SAP BTP and its unique capabilities.

There are a couple of caveats, though. First, the BW Bridge consumes additional “Capacity Units” (CU), i.e., the SAP cloud currency. As with all cloud providers, pricing of consumption-based applications can be a bit tricky, because until you use them you won’t know how much you will really consume. Second, the BW Bridge is based on SAP Business Warehouse, BUT you will not be able to run queries: the Bridge only provides the data management capabilities of the good old SAP BW. Also, the BW Bridge can only handle ODP connectors. While this makes sense in a way, it means that not even file ingestion is possible.

Now, the obvious question from the SNP perspective: how does SNP Glue fit into this picture? There are multiple instances where Glue is very helpful in the transition, but also in the day-to-day operation of this technology:

- You can use SNP Glue to migrate legacy SAP BW data in a “one hop” scenario from your legacy SAP BW system (or even from multiple BW systems!) directly into Datasphere.

- More importantly, you can use SNP Glue to extract data from SAP NetWeaver-based systems or from SAP’s cloud solutions and stream it into Datasphere on an ongoing basis. This is what we call the “one hop” scenario. To some degree, this scenario may help customers transition without needing the BW Bridge as a “man-in-the-middle”.

- The same is true for non-SAP data sources. You can, for example, stream data from Salesforce into Datasphere to build reporting and data science scenarios that include heterogeneous data from various solutions and even external data sources.

- In a “double hop” scenario, customers can use Datasphere to collect SAP business data, process, filter, and enrich it, and pass the result on to a more global data lake based on a hyperscaler, emerging technologies like Snowflake, or proven big data platforms such as Cloudera (CDP). An example of such a data lake scenario would be blending asset and plant maintenance data with non-SAP data sources, such as sensor data feeds, to implement predictive maintenance.
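The “process, filter, enrich” step of such a double hop can be sketched in a few lines of Python. The sketch assumes hypothetical field names (`equnr` for the SAP equipment number, `plant`), a sensor feed of `(equnr, value)` pairs, and a fixed threshold as a simple rule-based stand-in for a real predictive model; none of this is a Datasphere or SNP Glue API.

```python
from statistics import mean


def blend(equipment, readings, threshold):
    """Enrich SAP equipment records with sensor statistics and flag outliers.

    equipment: list of dicts from SAP (hypothetical keys 'equnr', 'plant').
    readings:  iterable of (equnr, value) pairs from a non-SAP sensor feed.
    Equipment without any readings is filtered out (inner-join semantics).
    """
    by_equipment = {}
    for equnr, value in readings:
        by_equipment.setdefault(equnr, []).append(value)

    blended = []
    for eq in equipment:
        values = by_equipment.get(eq["equnr"])
        if not values:
            continue
        avg = mean(values)
        blended.append({**eq, "avg_reading": avg, "needs_check": avg > threshold})
    return blended


# Usage
equipment = [
    {"equnr": "E1", "plant": "1000"},
    {"equnr": "E2", "plant": "1000"},
    {"equnr": "E3", "plant": "2000"},
]
readings = [("E1", 70.0), ("E1", 90.0), ("E2", 40.0)]
for rec in blend(equipment, readings, threshold=75.0):
    print(rec)
```

In the second hop, the blended result would then be handed on to the target data lake, where a proper model can replace the fixed threshold.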

With SNP Glue, customers can choose between running it as a pure SAP add-on on their SAP NetWeaver systems and the new SNP Glue cloud features, which, at the time of writing, are in ramp-up with pilot customers.

Lastly, SAP offers no solution for migrating a cold data store (e.g., NLS) to the new cloud-based world. With SNP’s Outboard suite, which covers data management and archiving for SAP ERP and BW, it is possible to expose such archive data to any data lake or data warehouse technology, be it SAP’s own Datasphere, another (possibly less expensive) cloud store, or even an SQL database.
